Boring is an investment. Exciting is a bill.

The calmest PostgreSQL deployments in production share one trait. They are boring. Pages stay quiet. Dashboards stay green. The on-call engineer reads a book on Tuesday night. And the people running those databases will tell you, plainly, that boring is the achievement.

Think about flying for a minute. The flight everyone wants is the one where the captain says hello, the meal shows up on time, and a few hours later the wheels touch down in the right city. That flight is boring. It is also a small miracle. Behind that boring flight sit decades of compounded discipline. Pilots with thousands of simulator hours. Mechanics with checklists they have run a hundred times. Air traffic controllers, weather systems, redundant hydraulics, and post-incident reviews that the entire industry reads and learns from. The passenger experiences calm. Everyone else earns it.

A production database deserves the same lens.

What it takes to keep flights boring

A boring flight is the visible tip of an enormous iceberg of effort. Certifications get renewed. Engines get inspected on a schedule. Manuals get updated the moment something new is learned. Trainees fly with senior captains for years before they sit alone in the left seat. When something does go wrong somewhere in the world, the report becomes required reading for every operator in the industry.

This is the part most people forget when they admire how safe air travel has become. Safety is a continuous investment. The moment an airline starts cutting corners on the boring parts, the flights stop being boring.

Apply the same lens to PostgreSQL

Once you accept that boring is the goal, the operational picture rearranges itself.

A boring PostgreSQL deployment is one where autovacuum is tuned to the workload, replication lag stays inside a known band, backups get restored on a rehearsed cadence, parameters reflect the current workload, monitoring has baselines, capacity gets planned ahead of growth, schemas get reviewed before they ship, and the on-call runbook has been used in practice rather than written and forgotten.

Each item on that list is unglamorous on its own. Together, they are what calm looks like.
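To make one item on that list concrete, here is a minimal Python sketch of what "inside a known band" could mean for replication lag. The function names, the three-sigma width, and the sample values are all illustrative assumptions, and a real deployment would pull its samples from whatever monitoring it already runs.

```python
from statistics import mean, pstdev


def known_band(samples, k=3.0):
    """Derive a (low, high) band from historical lag samples, in seconds.

    Assumption: the band is mean +/- k population standard deviations,
    with the lower edge clamped at zero because lag cannot be negative.
    """
    mu = mean(samples)
    sigma = pstdev(samples)
    return (max(0.0, mu - k * sigma), mu + k * sigma)


def outside_band(value, band):
    """Return True when a new reading falls outside the known band."""
    low, high = band
    return not (low <= value <= high)


# Hypothetical history: lag readings from a quiet week, in seconds.
history = [1.0, 2.0, 3.0]
band = known_band(history)

print(outside_band(4.0, band))   # a reading close to the usual range
print(outside_band(30.0, band))  # a reading far above the usual range
```

The point of the sketch is the shape of the habit rather than the statistics. A baseline is written down ahead of time, so a new reading can be judged against it without a debate.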

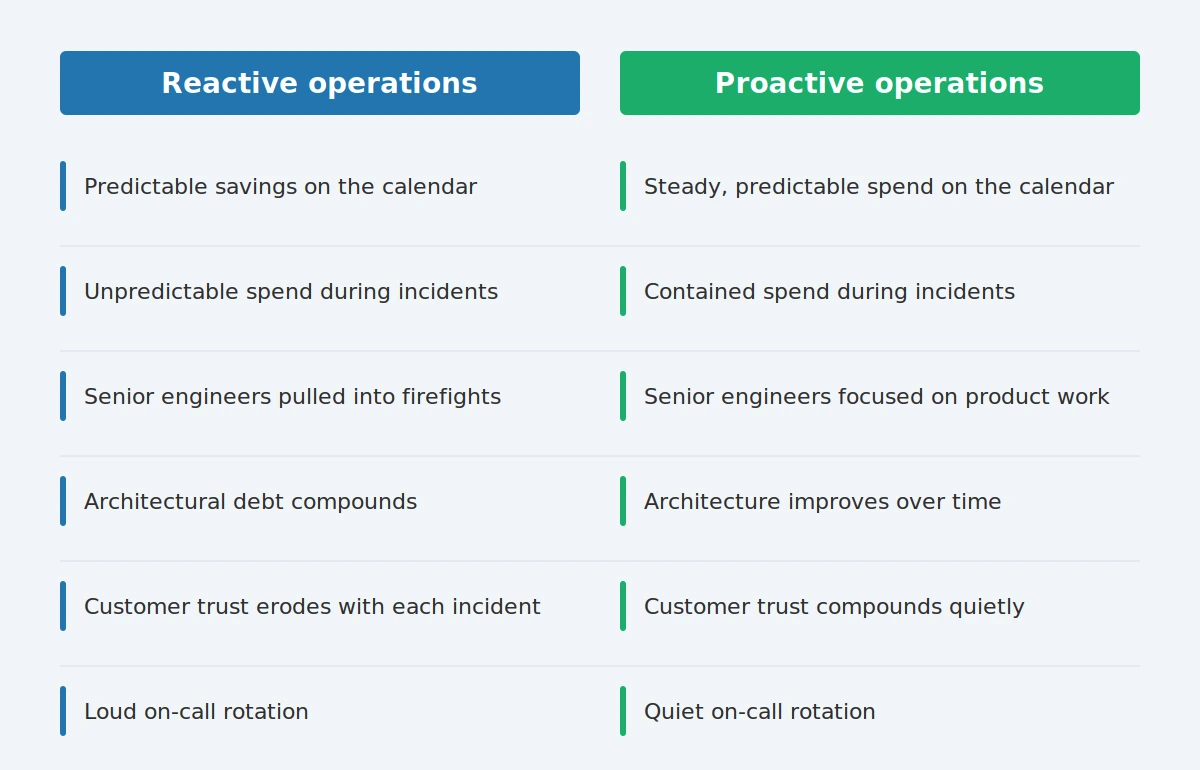

The cost math, told as two stories

Picture two organizations running similar PostgreSQL workloads.

The first one runs lean. The team is sharp, the database mostly behaves, and the budget for proactive work stays small. Then, one Friday afternoon, a long-running query holds a lock that backs up the connection pool. The application slows, then stalls. Four engineers get pulled in. The CTO joins the bridge. A customer commitment slips. By Monday morning, the team has restored service and written a postmortem that recommends six pieces of preventive work, most of which will wait, because the next incident is already forming on the horizon. The savings on the budget get paid back, with interest, in a single afternoon.

The second organization spends predictably on the boring work. A senior PostgreSQL engineer reviews the slow query log every week. Quarterly health checks catch the index that was about to become a problem. Autovacuum settings get revisited when the write pattern shifts. The on-call rotation handles maybe one minor page a month, often during business hours, often resolved before the customer notices.

The total annual spend of the second organization is usually larger on the proactive side and dramatically smaller everywhere else. Lower incident frequency, shorter incident duration, fewer engineers pulled off product work, customer trust that compounds quietly, and an architecture that improves over time because the team has the bandwidth to improve it. The math, once you add the long tail of an incident (customer churn, engineering distraction, reputational drag, the meetings about the meetings), almost always favors the boring path.
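The arithmetic behind that claim can be sketched in a few lines of Python. Every number below is a made-up placeholder rather than a measurement, and the `OpsProfile` class and its field names are hypothetical. Substitute your own figures before drawing any conclusion.

```python
from dataclasses import dataclass


@dataclass
class OpsProfile:
    proactive_spend: int         # yearly budget for reviews, drills, health checks
    incidents_per_year: int
    engineers_per_incident: int
    hours_per_incident: int
    hourly_cost: int             # loaded cost of one engineer hour
    long_tail_per_incident: int  # churn, distraction, reputational drag

    def incident_cost(self) -> int:
        # Direct cost is engineer time; the long tail is added per incident.
        direct = (self.engineers_per_incident
                  * self.hours_per_incident
                  * self.hourly_cost)
        return self.incidents_per_year * (direct + self.long_tail_per_incident)

    def total_cost(self) -> int:
        return self.proactive_spend + self.incident_cost()


# Hypothetical figures for the two organizations in the story.
lean = OpsProfile(proactive_spend=20_000, incidents_per_year=12,
                  engineers_per_incident=4, hours_per_incident=8,
                  hourly_cost=150, long_tail_per_incident=25_000)
boring = OpsProfile(proactive_spend=120_000, incidents_per_year=2,
                    engineers_per_incident=2, hours_per_incident=2,
                    hourly_cost=150, long_tail_per_incident=5_000)

print(lean.total_cost())    # 377600
print(boring.total_cost())  # 131200
```

With these placeholder inputs, the boring organization spends six times as much on proactive work and still comes out far ahead, because the long-tail term dominates the direct engineer hours. That term is also the hardest one to estimate, which is part of why the case is hard to make in advance.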

Most operators already know this in their gut. The hard part is making the case for the spend before the next incident proves the point.

The heroism trap

Here is the part that tends to start arguments, so let me be direct about it.

Engineering culture celebrates the engineer who rescues production at 3am. The Slack channel fills with applause. The story gets told at the all-hands. The recovery becomes part of the legend.

Aviation does the opposite. The pilot who lands a plane after a fuel leak gets a medal, sure. The maintenance crew that would have caught the leak before takeoff gets the budget. The whole industry is organized to keep heroism rare because every act of heroism is also evidence that the system allowed something to go wrong.

Database operations would benefit from the same shift. A pager that rings often is a signal worth listening to. A team that has the recovery playbook memorized is a team whose database has been allowed to be exciting for too long. The goal is fewer heroes and more boring Tuesdays.

What “exciting” actually looks like

Before getting to the cadence, it helps to name the pattern. An exciting database has a few tells, and most teams running one will recognize two or three of them on sight.

The on-call rotation has a rhythm to it, and the rhythm is loud. Pages land in clusters, often during the same windows. The team has unofficial nicknames for the recurring offenders: the query that always shows up at month-end, the job that backs everything up on Sunday nights, the dashboard that stops updating once a quarter. Everyone knows the workarounds. Nobody has had time to fix the underlying causes.

Performance moves around without warning. A query that ran in 200 milliseconds last week takes four seconds today, and nobody has a clean answer for why. The team reaches for the same handful of explanations (statistics, autovacuum, a noisy neighbor) and tries them in order until one works. The fix gets applied. The root cause stays unconfirmed. The same symptom returns in six weeks, and the cycle starts over.

Senior engineers spend a meaningful slice of their week in incident channels. The product roadmap slips quietly, one Friday afternoon at a time. A planned feature gets pushed because the team that was supposed to build it spent three days last sprint chasing a replication lag that turned out to be a long-running analytics query nobody had documented.

Backups exist, but the last full restore drill happened a long time ago. The team is fairly sure the backups would work in a real disaster. Fairly sure is a hard thing to say out loud, so it tends to stay unsaid.

Architectural decisions get deferred because there is no bandwidth for them. The schema change that would simplify three downstream systems waits another quarter. The partitioning strategy that would calm down vacuum waits another quarter. Each deferral is reasonable on its own. Together, they form a slow drift toward more excitement.

If two or three of those patterns sound familiar, the database has been allowed to be exciting for a while. That recognition is the moment the cost math from earlier in this post stops being abstract. The bill is already being paid. The question is what to do about it.

What boring on purpose looks like, week to week

A practical rhythm helps. The cadence becomes the product.

Daily, the team reviews signals against baselines and triages alert noise so that real signals stay loud. Weekly, slow query trends, bloat health, and replication lag patterns get a once-over. Monthly, the team rehearses a backup restore on real data, reviews capacity against actual growth, and sanity-checks parameters against the current workload. Quarterly, a full Performance Health Check looks at the whole system rather than its symptoms. Yearly, the team executes a real failover, planned, supervised, and treated as a learning event.
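One way to keep that rhythm honest is to write it down as data, so that "what is due today" has a single answer. The Python sketch below is only an illustration. The trigger days (Mondays for weekly work, the first of the month for monthly work, and so on) are assumptions of this example, and the names `CADENCE`, `cadences_due`, and `tasks_due` are hypothetical.

```python
from datetime import date

# The cadence from the paragraph above, expressed as data.
CADENCE = {
    "daily": ["review signals against baselines", "triage alert noise"],
    "weekly": ["slow query trends", "bloat health", "replication lag patterns"],
    "monthly": ["rehearse a backup restore on real data",
                "review capacity against actual growth",
                "sanity-check parameters against the current workload"],
    "quarterly": ["full performance health check"],
    "yearly": ["planned, supervised failover"],
}


def cadences_due(day: date) -> list[str]:
    """Return which cadences fall on the given day.

    Assumed triggers: weekly on Mondays, monthly on the 1st, quarterly on
    the 1st of January, April, July, and October, yearly on January 1st.
    """
    due = ["daily"]
    if day.weekday() == 0:  # Monday
        due.append("weekly")
    if day.day == 1:
        due.append("monthly")
        if day.month in (1, 4, 7, 10):
            due.append("quarterly")
        if day.month == 1:
            due.append("yearly")
    return due


def tasks_due(day: date) -> list[str]:
    """Flatten the due cadences into a single task list for the day."""
    return [task for name in cadences_due(day) for task in CADENCE[name]]


print(cadences_due(date(2024, 1, 2)))  # an ordinary Tuesday: ['daily']
print(len(tasks_due(date(2024, 1, 1))))  # a Monday that is also January 1st
```

A real team would feed a list like this into a ticketing system or a calendar rather than a script. The design choice that matters is that the schedule lives somewhere reviewable instead of in people's heads.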

All of that is ordinary work. All of it is the work that keeps the database boring.

Why this matters for your organization

PostgreSQL is one of the most capable databases ever built. In skilled hands, run with discipline, it carries enormous workloads quietly for years. The organizations getting that experience are the ones that have decided, on purpose, to invest in the boring parts. Deep PostgreSQL expertise, applied steadily, so the database becomes a part of the stack that the rest of the company stops thinking about. That quiet is the goal. It is also the achievement.